Control Surfaces for AI: The Operator Interface Nobody Budgets For

Autonomy doesn’t fail in the model. It fails where truth is late and actions are irreversible.

Summary: Autonomy doesn’t fail in the model. It fails in the boring places where truth is late and irreversible actions propagate. If you can’t observe, explain, override, roll back, and prove what happened, you don’t have autonomy; you have unmanaged risk with a UI. Here’s what executives should fund first: evidence, exception handling, real overrides, tested rollback, and observability that reflects operational reality.

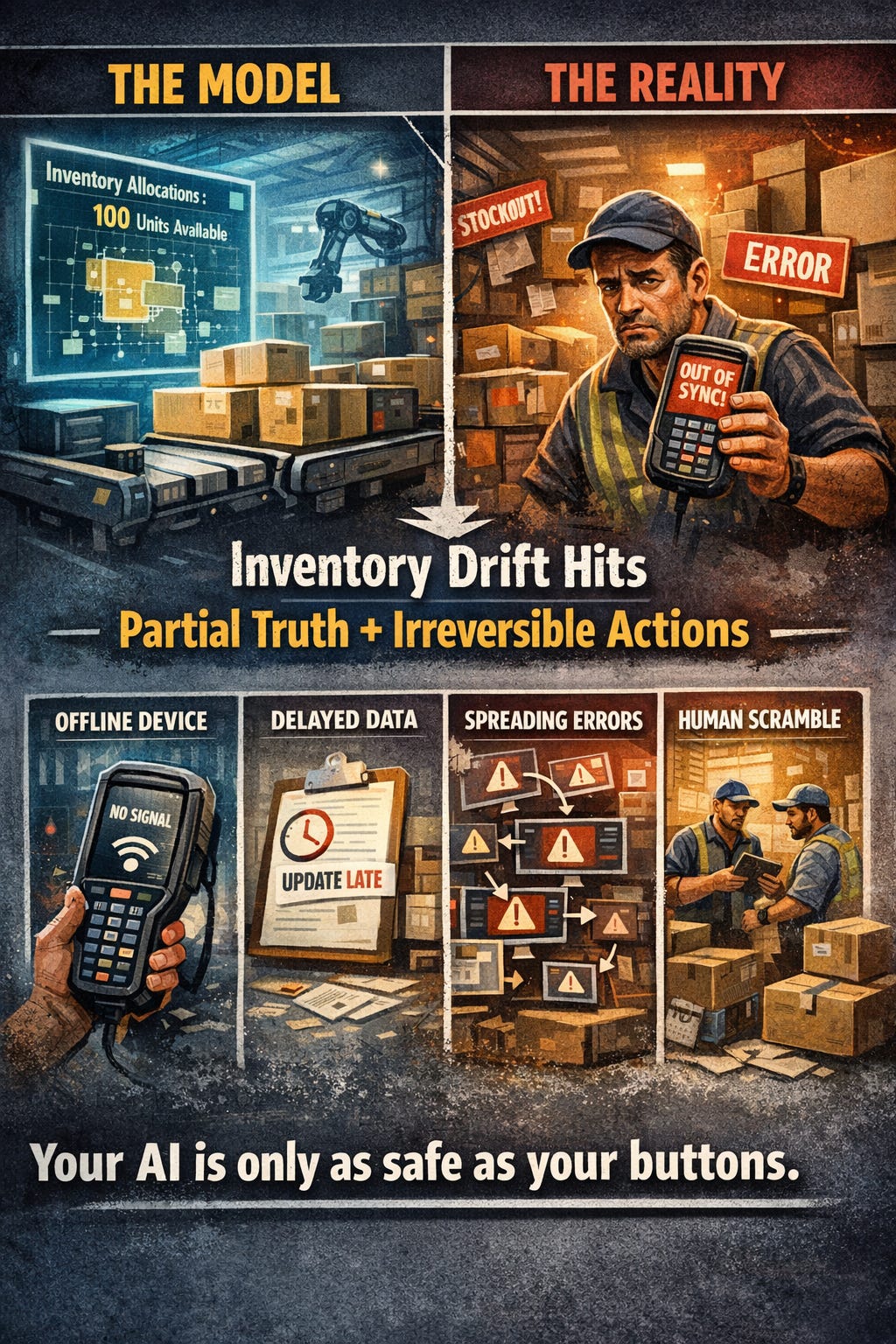

Opening (Reality vs Model) — inventory drift is where “autonomy” gets real

Your AI is only as safe as your buttons.

Here’s the unglamorous place where autonomy actually breaks: state-changing actions taken under partial truth — decisions that are locally correct in the moment, then re-litigated later when more data arrives.

The failure mode is simple: partial truth + irreversible state change + integration propagation.

If you build systems for real operations, you know this pattern:

the edge is offline or interrupted,

the data is late,

the action is already experienced by the customer or the warehouse,

and integration propagates the consequences before the “full truth” shows up.

Inventory movements are just the cleanest example because they’re irreversible enough to hurt, common enough to matter, and integrated enough to propagate.

A picker scans items and confirms quantities. The handheld is offline (or the connection is weak, or the shift is moving too fast). The system makes a decision locally — *this is what happened* — and business continues on that basis: allocations, replenishment, promises to customers.

Then the device syncs later.

Now a centrally biased ruleset replays the world as if it were clean: dedupe logic, late validations, ERP “truth” corrections. The system rewrites yesterday’s reality with today’s information.

What looked like a single inventory movement becomes a cascade:

reservations get churned and re-assigned,

replenishment triggers fire (or don’t) based on rewritten states,

pick waves break and operators get sent back into the aisle,

stockouts appear on paper while inventory is physically somewhere else,

exceptions pile up and humans spend hours reconciling what the system *now* claims happened.

Not because anyone was careless — because the system treated late truth as if it were the only truth.

The model didn’t fail. The missing layer did.

Because when this happens, most orgs discover they have no control surface:

they can’t see exactly what the system believed at decision time,

they can’t explain why it was allowed,

they can’t override it without side effects,

they can’t roll back cleanly,

and they can’t prove the chain of causality without a forensic dig.

If you can’t observe, explain, override, roll back, and prove what happened, you don’t have autonomy; you have unmanaged risk with a UI.

The thesis - executive version

You don’t need more intelligence — you need controllability.

In operations, autonomy is not a model capability. It’s a system property.

And the limiting factor isn’t whether an AI can propose the right action. It’s whether the organization can:

keep decision-making inside explicit boundaries,

see and stop failure early,

reverse the blast radius,

and produce evidence when the system is questioned (internally, by partners, or by regulators).

That layer is what I call a control surface: the operator interface plus the governance primitives that make automation safe to trust.

What to fund first - before you fund “more autonomy”

If you’re sponsoring autonomy in a real operation (warehouse, field service, last-mile, infrastructure), the safest question to ask is not “how accurate is the model?”

Ask: what will we do when the system is wrong, late, or under partial truth?

Fund these first — before you fund more autonomy.

1) Evidence, so you can explain what happened

You need an event trail that makes “who knew what, when?” answerable without a forensic dig.

If inventory drift is your reality, evidence is the difference between reconciling and arguing.

Example: a mobile device records a pick confirmation offline at 10:07. The ERP receives it at 11:42. In between, the system made replenishment and reservation decisions based on what it believed at the time. When quantities later “correct,” the question isn’t “who is wrong?” but “what did the system know when it acted, and what changed after?”

Minimum:

decision event logs (inputs used, what was stale/missing, thresholds)

state transitions (before/after)

reason codes tied to policy/constraints

linkage from decision → action → downstream records

A practical test: can an operator (or auditor) reconstruct the timeline in under 5 minutes without asking engineers to dig through logs?
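To make the minimum concrete, here is a small sketch of a decision event record and a timeline lookup. The field names, IDs, reason codes, and the date are illustrative assumptions, not a prescribed schema; the point is only that each decision carries its inputs, its staleness flags, its before/after state, and links to the downstream records it caused.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class DecisionEvent:
    """One durable record of what the system believed when it acted."""
    decision_id: str
    decided_at: datetime
    action: str
    reason_code: str                  # ties the decision to a policy/constraint
    inputs: dict                      # values used at decision time
    stale_inputs: tuple = ()          # input names known to be stale or missing
    thresholds: dict = field(default_factory=dict)
    state_before: dict = field(default_factory=dict)
    state_after: dict = field(default_factory=dict)
    downstream_ids: tuple = ()        # records this decision caused or changed


def timeline(events, record_id):
    """Return, oldest first, every decision linked to one downstream record."""
    linked = [e for e in events if record_id in e.downstream_ids]
    return sorted(linked, key=lambda e: e.decided_at)


# Hypothetical events for a single reservation (all values are made up).
events = [
    DecisionEvent(
        decision_id="D-2",
        decided_at=datetime(2024, 1, 15, 11, 45),
        action="correct_quantity",
        reason_code="LATE_SYNC_CORRECTION",
        inputs={"on_hand": 38},
        state_before={"available": 40},
        state_after={"available": 38},
        downstream_ids=("RES-17",),
    ),
    DecisionEvent(
        decision_id="D-1",
        decided_at=datetime(2024, 1, 15, 10, 30),
        action="reserve_stock",
        reason_code="AVAILABLE_GE_REQUESTED",
        inputs={"on_hand": 40},
        stale_inputs=("on_hand",),    # the offline pick had not synced yet
        state_before={"reserved": 0},
        state_after={"reserved": 2},
        downstream_ids=("RES-17",),
    ),
]

for e in timeline(events, "RES-17"):
    print(e.decided_at.time(), e.action, e.reason_code, "stale:", e.stale_inputs)
```

If an operator can run the equivalent of `timeline(...)` from a screen rather than a log search, the five-minute test becomes realistic.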

2) Exception handling as a first-class product surface

Exceptions are not an edge case. They’re where operations spend money.

Example: a late sync changes available quantity and the system can’t reconcile reservations cleanly. If that turns into a generic “inventory mismatch” alert, it becomes pure noise. Operators either ignore it (and the mismatch becomes a customer issue later) or they stop trusting the system entirely.

A governable system routes exceptions like work, not like drama:

classify the exception (what kind of mismatch is this?),

assign ownership (who is accountable to resolve it?),

set an SLA (how long can we tolerate it?),

define a safe default (what happens if nobody touches it?),

and capture feedback that improves the policy (so the same exception happens less).

Fund:

an operator queue that supports triage

clear ownership and SLAs for exception types

safe defaults when nobody responds

feedback capture that improves policy (not blame)
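A rough sketch of what “route exceptions like work” could look like in code. The exception types, owners, SLA durations, and safe defaults below are hypothetical placeholders; a real table would come from the operation itself.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ExceptionRoute:
    owner: str          # who is accountable to resolve it
    sla: timedelta      # how long the mismatch can be tolerated
    safe_default: str   # what happens if nobody touches it


# Hypothetical routing table, for illustration only.
ROUTES = {
    "late_sync_quantity_conflict": ExceptionRoute(
        "inventory_control", timedelta(hours=2), "hold_affected_reservations"
    ),
    "duplicate_pick_confirmation": ExceptionRoute(
        "shift_lead", timedelta(minutes=30), "keep_first_confirmation"
    ),
}

# Unclassified exceptions still get an owner, an SLA, and a safe default.
FALLBACK = ExceptionRoute("ops_manager", timedelta(hours=1), "hold_and_escalate")


def route(exception_type):
    """Classify first, then look up ownership, SLA, and safe default."""
    return ROUTES.get(exception_type, FALLBACK)


def action_due(exception_type, opened_at, now):
    """Return the safe default once the SLA has lapsed, otherwise None."""
    r = route(exception_type)
    return r.safe_default if now - opened_at > r.sla else None
```

The design choice worth noting is the fallback: an exception the system cannot classify is still owned by someone and still has a defined outcome when nobody responds.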

3) Override with authority

A “manual approval step” is not governance if it’s a dumping ground.

Example: inventory sync arrives late and the system wants to auto-correct quantities and re-run allocations. If the only override is a generic approval popup, operators will do one of two things:

approve everything to clear the queue (because the shift is moving), or

bypass the feature entirely (because it creates work).

A real override is closer to a safety control:

it has scope (“stop *this* adjustment, not the whole warehouse”),

it has consequences (“if vetoed, route to exception type X”),

and it has a safe default (what happens if nobody responds).

Fund:

veto power with clear accountability

escalation paths when vetoed

policy-level constraints (what is never allowed)

rate limits / quotas to prevent rubber-stamping
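One way to picture a scoped override, as opposed to a generic approval popup, is the sketch below. It assumes a veto applies to a single adjustment, requires a named operator, and routes to an exception type; the names, the exception types, and the “hold” safe default are assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    APPROVE = "approve"
    VETO = "veto"


@dataclass(frozen=True)
class PendingAdjustment:
    adjustment_id: str   # scope: this adjustment, not the whole warehouse
    sku: str
    location: str
    delta: int


def resolve(adjustment, verdict, operator=None):
    """Turn an operator verdict (or silence) into an explicit outcome.

    verdict=None means nobody responded before the deadline.
    """
    base = {"adjustment_id": adjustment.adjustment_id, "accountable": operator}
    if verdict is None:
        # Assumed safe default: hold the adjustment instead of auto-applying it.
        return {**base, "outcome": "held", "route_to": "unreviewed_adjustment"}
    if verdict is Verdict.VETO:
        if not operator:
            raise ValueError("a veto needs a named, accountable operator")
        # A veto has consequences: it becomes a typed exception, not a dead end.
        return {**base, "outcome": "vetoed", "route_to": "late_sync_quantity_conflict"}
    return {**base, "outcome": "applied", "route_to": None}
```

Every branch returns an explicit outcome, so “nobody clicked anything” is a decision the system records rather than a gap it papers over.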

4) Rollback (or compensation) that’s actually tested

A kill switch without rollback is theatre.

Inventory is a perfect example because you rarely get a clean “undo.”

If the system auto-posts an adjustment and that adjustment triggers downstream work (replenishment tasks, re-reservations, re-picks), rolling back the original adjustment isn’t enough — you need compensation that unwinds the operational consequences.

This is where autonomy projects quietly die: the model can decide, but the organization cannot reverse the blast radius without heroics.

Fund:

explicit rollback/compensation workflows per action type

time windows where rollback is clean

ownership of rollback when customer/inventory/finance are impacted

idempotency, retries, and dedupe designed as control, not plumbing

A practical gate: for every automated action, write down the rollback path before you ship it. If you can’t, that workflow is not ready for autonomy.
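The gate can be enforced mechanically. The sketch below assumes a simple registry where each action type must register a compensation before it is allowed to run automatically, and where consequences are unwound in reverse order. The action types and record fields are hypothetical.

```python
COMPENSATIONS = {}


def compensates(action_type):
    """Register the function that unwinds one action type."""
    def register(fn):
        COMPENSATIONS[action_type] = fn
        return fn
    return register


@compensates("post_adjustment")
def reverse_adjustment(record):
    # Compensation is a new, opposite posting, not a deletion of history.
    return [f"post_adjustment:{record['sku']}:{-record['delta']}"]


@compensates("create_replenishment_task")
def cancel_replenishment(record):
    return [f"cancel_task:{record['task_id']}"]


def missing_rollback_paths(action_types):
    """Action types that have no compensation written down yet."""
    return sorted(set(action_types) - set(COMPENSATIONS))


def unwind(executed):
    """Compensate executed actions newest-first, so downstream work is undone
    before the adjustment that triggered it."""
    steps = []
    for action_type, record in reversed(executed):
        steps.extend(COMPENSATIONS[action_type](record))
    return steps
```

For example, `missing_rollback_paths(["post_adjustment", "re_reserve"])` reports `re_reserve` as not ready, which is exactly the conversation to have before shipping.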

5) Observability that matches operational reality

Dashboards are not observability if they hide latency and partial truth.

Fund:

timing markers (when data was captured vs when it arrived)

confidence/uncertainty surfaced in operational terms

drift detection (what changed after the decision)
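The timing markers and drift check can be very small. This sketch reuses the 10:07 capture and 11:42 arrival from the evidence example; the date, function names, and state fields are assumptions made for illustration.

```python
from datetime import datetime


def truth_lag(captured_at, arrived_at):
    """How long the system acted without knowing about an event."""
    return arrived_at - captured_at


def decided_in_gap(decision_times, captured_at, arrived_at):
    """Decisions made after the event happened but before the system knew."""
    return [t for t in decision_times if captured_at <= t < arrived_at]


def drift(believed, current):
    """Fields that changed after the decision, as name -> (then, now)."""
    keys = believed.keys() | current.keys()
    return {
        k: (believed.get(k), current.get(k))
        for k in keys
        if believed.get(k) != current.get(k)
    }


captured = datetime(2024, 1, 15, 10, 7)    # pick confirmed on the handheld
arrived = datetime(2024, 1, 15, 11, 42)    # confirmation reaches the ERP
decisions = [datetime(2024, 1, 15, 10, 30), datetime(2024, 1, 15, 12, 0)]

lag = truth_lag(captured, arrived)
at_risk = decided_in_gap(decisions, captured, arrived)
changed = drift({"available": 40, "reserved": 5}, {"available": 38, "reserved": 5})
```

Here the lag is 95 minutes, and the 10:30 decision falls inside it. A dashboard that shows only the arrival time hides both facts.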

Rule of thumb: if you can’t fund evidence + rollback, you can’t afford autonomy.

What not to fund first (common traps)

These look productive in slide decks and fail in the field:

“More intelligence” without controls (you scale failure faster)

Autonomy in irreversible workflows (inventory, finance, customer promises) without rollback

Manual approvals as a safety blanket (becomes blame-in-the-loop)

A control surface built after the incident (the worst time to design governance)

Minimal reference architecture (so this is buildable)

Keep it simple. You’re not buying “an AI model.” You’re buying a governed decision pipeline.

Minimum components:

Decision service: proposes/acts, but only inside explicit bounds

Policy/constraints layer: the real authority (what is allowed, when, and under what evidence)

Evidence log: durable decision events + inputs + reason codes + thresholds

State model: explicit state transitions (before/after) with IDs that link downstream

Exception queue: triage + ownership + SLAs + safe defaults

Override controls: veto/escalate with accountability (not a ceremonial approval)

Rollback/compensation workflows: tested undo paths for each action class

Monitoring: latency + partial truth + drift (what changed after the decision)

If you can’t point to these parts (even if they’re thin at first), autonomy will become a collection of one-off behaviors you can’t govern.
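To show how thin the first version can be, here is a sketch of the split between the decision service and the policy/constraints layer: the service proposes, the policy layer decides whether the proposal is inside bounds and returns a reason code for the evidence log. The bounds, action names, and reason codes are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    action: str
    quantity_delta: int
    input_age_minutes: float   # age of the freshest evidence behind the proposal


# Hypothetical policy values; real ones belong to the operation, not the model.
NEVER_ALLOWED = {"write_off_stock"}
MAX_AUTO_DELTA = 5
MAX_INPUT_AGE_MINUTES = 30


def authorize(proposal):
    """Return (allowed, reason_code). The policy layer is the real authority."""
    if proposal.action in NEVER_ALLOWED:
        return False, "ACTION_NEVER_ALLOWED"
    if proposal.input_age_minutes > MAX_INPUT_AGE_MINUTES:
        return False, "EVIDENCE_TOO_STALE"
    if abs(proposal.quantity_delta) > MAX_AUTO_DELTA:
        return False, "DELTA_OUTSIDE_BOUNDS"
    return True, "WITHIN_BOUNDS"
```

A refused proposal is not an error. It is an input to the exception queue, and its reason code is what makes the refusal explainable later.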

Define “control surface” (non-hype)

A control surface is the operator interface + governance layer that makes automation:

observable (you can see what it did, and what changed)

explainable (you can see why it believed it was allowed)

overrideable (you can stop it or change the decision authority)

reversible (you can roll back or compensate without a forensic dig)

provable (you can audit and reconstruct the timeline)

If those primitives don’t exist, you don’t have a system you can trust.

The 5 primitives (compressed)

This is the control surface in one screen.

Observe: decisions + actions logged, with before/after state and timing (what was stale/missing).

Explain: reason codes tied to policy/constraints + the evidence used at decision time.

Override: real veto and escalation paths, with safe defaults (not approval theatre).

Rollback: tested undo/compensation paths with owners and time windows.

Prove: a minimal audit trail that reconstructs the timeline without a forensic dig.

Autonomy maturity ladder (4 levels)

This is how you prevent “we shipped autonomy” from outrunning governance.

1) Manual: humans decide + act. Evidence is implicit.

2) Assisted: system proposes; human acts. Evidence must be visible.

3) Supervised: system acts inside bounds; humans handle exceptions. Rollback is defined.

4) Bounded autonomy: system acts with explicit constraints, evidence, and tested rollback.

Gate rule: you only move up a level when the control surface is stronger than the autonomy.
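The gate rule can be written down as a check. The sketch below is one reading of the ladder: each level names the controls it needs, and a workflow may only run at the highest level whose requirements, and those of every level beneath it, are met. The control names are assumptions derived from the level descriptions above.

```python
from enum import IntEnum


class Level(IntEnum):
    MANUAL = 1
    ASSISTED = 2
    SUPERVISED = 3
    BOUNDED_AUTONOMY = 4


# One interpretation of what each level requires; adjust to your operation.
REQUIRED = {
    Level.MANUAL: set(),
    Level.ASSISTED: {"evidence_visible"},
    Level.SUPERVISED: {
        "evidence_visible", "explicit_bounds",
        "exception_handling", "rollback_defined",
    },
    Level.BOUNDED_AUTONOMY: {
        "evidence_visible", "explicit_bounds",
        "exception_handling", "rollback_defined", "rollback_tested",
    },
}


def highest_allowed(controls_in_place):
    """Climb the ladder one level at a time; stop at the first unmet gate."""
    in_place = set(controls_in_place)
    allowed = Level.MANUAL
    for level in Level:
        if REQUIRED[level] <= in_place:
            allowed = level
        else:
            break
    return allowed
```

With only `evidence_visible` in place, the answer is `Level.ASSISTED`, no matter how capable the model is.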

Artifact: Control Surface Checklist + Autonomy Maturity Ladder

Control Surface Checklist (copy/paste)

For every automated decision/action, answer:

Observe

What decision event is logged (with IDs linking to downstream records)?

What state changed (before/after)?

What inputs were used (and which were stale/missing)?

Explain

What policy/constraint allowed the action?

What evidence was used at the moment of decision?

What uncertainty existed (and what threshold was used)?

Override

Who can veto/change the decision?

What is the escalation path if vetoed?

What happens if nobody responds (safe default)?

Rollback

What is the rollback/compensation path?

What is the time window where rollback is clean?

Who owns rollback when customer/finance/inventory are impacted?

Prove

Can we reconstruct the full timeline in <5 minutes?

Can we answer “who knew what, when, and what changed after?”

Autonomy Maturity Ladder (4 levels)

1) Manual: humans decide + act. Evidence is implicit.

2) Assisted: system proposes; human acts. Evidence must be visible.

3) Supervised: system acts inside bounds; humans handle exceptions. Rollback is defined.

4) Bounded autonomy: system acts with explicit constraints, evidence, and tested rollback.

Gate rule: you only move up a level when the control surface is stronger than the autonomy.

Close

If you’re serious about autonomy in real operations, treat the control surface as the product.

Models will keep getting better. That’s not the bottleneck.

The bottleneck is whether your system remains governable when reality is late, partial, and messy — and whether your operators have the tools to keep the business moving without turning every exception into a war room.

Fund controllability first. Autonomy can only grow safely on top of it.

Discussion questions

Where in your systems does automation exist without a rollback path?

What’s your biggest control-surface debt today?

If you shipped autonomy tomorrow, what would your operators do: trust it, rubber-stamp it, or work around it?